A new study, published in PNAS, finds that conclusions drawn from quantitative research in psychology and the social sciences may be “worryingly imprecise” and that generalizing from group data to individuals may be flawed and misleading. The researchers, led by Aaron J. Fisher at the University of California, Berkeley, compared correlations found across groups with correlations found within individuals.

“We provide evidence that conclusions drawn from aggregated data may be worryingly imprecise,” Fisher writes. “Specifically, the variance in individuals is up to four times larger than in groups.”

The usual approach in quantitative research examines large-scale group differences, comparing the means (averages) between large groups. Often, the data appears to clump together around the mean, which seems to indicate that most people in the groups have a similar response.

However, Fisher’s research found that when individual-level data is examined, this clumping effect dissipates: the correlations vary far more from person to person and are, on average, weaker.

Fisher provides the example of a study examining typing speed and the number of typos made. At the group level, faster typing goes with fewer typos, because the best typists are both faster and more accurate. One might therefore conclude that people should type faster in order to make fewer typos. However, this is obviously false. On an individual level, the faster any one person types (no matter their skill level), the more typos they will make. When individual-level data is considered, the conclusion reached is the exact opposite of the group-level correlation.
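The reversal in the typing example can be reproduced with a small simulation. The sketch below is only an illustration: the skill scores, speeds, and typo rates are made-up numbers, not data from Fisher's study, and `pearson` and `simulate_typists` are hypothetical helper names.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def simulate_typists(n_people=50, n_sessions=30, seed=0):
    """Return one (speeds, typos) pair of lists per simulated typist."""
    rng = random.Random(seed)
    people = []
    for _ in range(n_people):
        skill = rng.uniform(0.0, 1.0)   # assumed skill score between 0 and 1
        base_speed = 30 + 60 * skill    # skilled typists are faster on average
        base_typos = 30 - 15 * skill    # and make fewer typos on average
        speeds, typos = [], []
        for _ in range(n_sessions):
            push = rng.gauss(0, 5)      # typing faster or slower than usual
            speeds.append(base_speed + push)
            # Rushing adds typos for everyone, whatever their skill level.
            typos.append(base_typos + 0.5 * push + rng.gauss(0, 0.5))
        people.append((speeds, typos))
    return people

people = simulate_typists()

# Group level: one average speed and one average typo rate per person.
mean_speeds = [sum(s) / len(s) for s, _ in people]
mean_typos = [sum(t) / len(t) for _, t in people]
group_r = pearson(mean_speeds, mean_typos)

# Individual level: one correlation per person, across that person's sessions.
individual_rs = [pearson(s, t) for s, t in people]

print(f"group-level correlation: {group_r:.2f}")
print(f"individual correlations range from {min(individual_rs):.2f} "
      f"to {max(individual_rs):.2f}")
```

Under these assumed parameters the group-level correlation is negative (faster typists make fewer typos), while each person's own correlation is positive (typing faster than usual produces more typos). The same data therefore supports opposite conclusions depending on the level of analysis.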

Fisher argues that most quantitative research in the social sciences falls prey to this type of error (known as the ecological fallacy or, in a closely related form, Simpson’s paradox). According to Fisher, these findings “threaten the veracity of countless studies, conclusions, and best-practice recommendations.”

“Even in the best-case scenario, we should not think of a correlation in group data as an estimate that generalizes to any given individual in the population. Stated bluntly, this implies that the temptation to use aggregate estimates to draw inferences at the basic unit of social and psychological organization—the person—is far less accurate or valid than it may appear in the literature.”

In the current study, Fisher and his co-authors examined the data from seven previous studies in the mental health field, using a complex regression analysis to compare the interindividual results (the group findings) with the intraindividual results (the data for the individuals in the study).

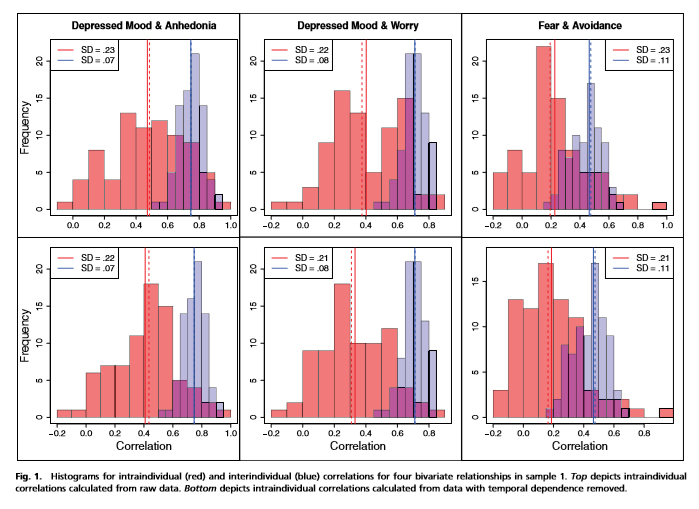

The graphs above, from one of the studies about depression, show that the group data (in blue) clusters at a high correlation, indicating that, for instance, depressed mood and anhedonia (inability to experience pleasure) were usually found together. However, the individual data (in red) is spread much more widely, and its average sits at a much lower correlation, indicating that there is a great deal of variance in whether these two symptoms are actually found together.
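To make the contrast between a tight group-level estimate and widely scattered individual estimates concrete, here is a minimal simulated sketch. It does not reproduce the authors' analysis or data. It assumes that each person has a stable overall severity plus a person-specific link between daily depressed mood and anhedonia, and the parameter values and the helper names `pearson` and `simulate_symptoms` are hypothetical.

```python
import random
import statistics

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def simulate_symptoms(n_people=40, n_days=60, seed=1):
    """Return one (mood, anhedonia) pair of daily series per simulated person."""
    rng = random.Random(seed)
    people = []
    for _ in range(n_people):
        severity = rng.gauss(0, 2)         # stable person-level severity (assumed)
        coupling = rng.uniform(-0.2, 0.9)  # this person's own symptom link (assumed)
        mood, anhedonia = [], []
        for _ in range(n_days):
            e1, e2 = rng.gauss(0, 1), rng.gauss(0, 1)
            mood.append(severity + e1)
            # Daily anhedonia follows daily mood only as strongly as `coupling`.
            anhedonia.append(
                severity + coupling * e1 + (1 - coupling ** 2) ** 0.5 * e2
            )
        people.append((mood, anhedonia))
    return people

people = simulate_symptoms()

# Group level: correlate each person's average mood with their average anhedonia.
group_r = pearson(
    [statistics.mean(m) for m, _ in people],
    [statistics.mean(a) for _, a in people],
)

# Individual level: one correlation per person, across that person's days.
individual_rs = [pearson(m, a) for m, a in people]
mean_individual_r = statistics.mean(individual_rs)
spread = statistics.stdev(individual_rs)

print(f"group-level correlation:         {group_r:.2f}")
print(f"mean individual correlation:     {mean_individual_r:.2f}")
print(f"std. dev. across individuals:    {spread:.2f}")
```

In this sketch the group-level correlation is high because severe cases score high on both symptoms, yet the per-person correlations are lower on average and differ widely from one person to the next. That is the pattern the blue and red distributions are described as showing.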

So what is the impact of this finding? Fisher and his colleagues suggest that many of the theories about mental health, and the treatments designed to improve mental health, are based on these aggregate findings. If individual-level variation contradicts those aggregate findings, then the theories and treatments may be flawed.

The researchers provide the following example taken from one of the studies in their analysis: the gold-standard therapy for phobic anxiety, exposure therapy, is based on the finding that, in general, anxiety and avoidance are highly correlated. However, on an individual level, there is a broad range of variation falling along a spectrum, from individuals who show very little avoidance when they are anxious, to individuals who show a moderate amount, to individuals who are very avoidant when anxious. Thus, although the average finding supports the use of anti-avoidance therapies, such as exposure, the individual findings do not support that theory as a universal truth.

Fisher and his colleagues suggest that future research needs to use these analytic techniques to determine if the individual results support or belie the general findings, before concluding that the findings could be applied to individuals.

****

Fisher, A. J., Medaglia, J. D., & Jeronimus, B. F. (2018). Lack of group-to-individual generalizability is a threat to human subjects research. PNAS, 115(27), E6106–E6115. www.pnas.org/cgi/doi/10.1073/pnas.1711978115

“A doctor would have been interviewed by police for rape and child cruelty over allegations a ‘truth drug’ was used to carry out abuse at a hospital.

The late Dr Kenneth Milner ran Aston Hall psychiatric hospital in Derbyshire from 1947 to the 1970s, which former patients described as ‘pure hell’.”

https://www.bbc.com/news/uk-england-derbyshire-44943056

Of course the ‘powers that be’ knew about this a long, long time ago.

Abuse is still going on, BBC. Time to investigate other psych ‘hospitals’ where people are knowingly killed by psych drugs!

ALL the psychiatrists know how bad these drugs are. The patients make a complaint and are told they lack insight and the complaint is verification of the ‘illness’ and they get away with the abuse. I’m talking about the GMC here.

In other words, everyone is an individual and what an individual needs or wants in order to move forward will be, well, gosh, INDIVIDUAL! This certainly supports my contention that the first rule of quality therapy, or any helping effort, is HUMILITY! What we know about groups is, at best, a probability distribution. Trying to apply the same approach to everyone based on a “diagnosis” or even a similar set of “symptoms” is both dangerous and foolish. Which kinda undermines the entire mental health system, doesn’t it?

Yeah, Steve, too bad that “undermining” is only theoretical and metaphorical….

BTW, “pre-emptive moderation” I understand. It sucks, but what can I do about it?

But what’s with this DELAYED pre-emptive moderation?

No, I do not think it’s at all unreasonable to expect my comments to be approved within a few short hours.

I see several comments that are days old, and STILL “awaiting moderation”….

And you have so far FAILED to answer several questions I asked you, relevant to “comment moderation”.

What’s your problem, Steve?

~Bradford
