I didn’t use the word statistical in the title because some of you might have backed away immediately. With this piece I’m starting a series about measurement and numbers in mental health research and practice, because of the scientific sheen they lend to the so-called ‘evidence-based’ movement currently in vogue, a sheen that makes it seem somehow irrefutable. After all, who wants to argue with using ‘evidence’ when we set out to work on or solve life problems?

But what are the philosophical underpinnings for what constitutes evidence and how have quantitative approaches so effectively trumped qualitative approaches in applied psychiatry, psychology, and the like? Furthermore, is it possible that quantitative ways of studying human experience may actually promote constricted, myopic views that hurt or oppress human beings?

In future pieces, I’m going to aim my analysis at the Western mental health movement’s entry into indigenous communities, but the points I attempt to make here are intended to pertain to all people.

I’m into how history affects us now, so I ask: what historical factors led us to believe we can quantify the experience of people?

When I think about that question, I remember my terrifyingly brilliant experimental psychology professor in my first year of graduate school. I’d gotten off to a bad start with her by pointing out she had a black mark on her forehead on Ash Wednesday. Having revealed my utter ignorance of all things Catholic, she brought forth her Jesuit-informed wrath by assigning our class a succession of four ‘quizzes’ to complete by the next class period. We were usually assigned but one.

These ‘quizzes’ were a kind of intellectual waterboarding with multiple essay questions like this: ‘Compare and contrast E. G. Boring’s theories regarding figure ambiguity with those of Georg Elias Müller.’ Most of us were struck by Dr. Boring’s unfortunate moniker. There was only one way to complete four of these, and that was to work together in groups night and day until the next class period. My fellow students were not pleased at all with me.

And yet I’ll bet most of them still remember the Weber-Fechner Law.

Developed by the 19th-century German philosopher-psychologists Ernst Heinrich Weber and Gustav Fechner, this law states that a perceived ‘just noticeable difference’ (j.n.d.) between two sequential stimuli can be scaled logarithmically in relation to the comparative physical magnitude or amplitude of each presented stimulus.

Huh?

Let me try again: “Subjective sensation is proportional to the logarithm of stimulus intensity.” [1]

I do apologize. A demonstration might help: when your music player starts at a low volume, it takes a smaller increase for you to ‘just notice a difference’ than when it is already turned up high. Because the noticeable step grows in proportion to the starting level, the relationship can be put on a logarithmic scale.
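In rough computational terms, Weber’s half of the law says the just noticeable difference is a fixed fraction of the starting intensity, and Fechner’s half says sensation grows with the logarithm of intensity. The Python sketch below is only an illustration under stated assumptions: the 10% Weber fraction, the threshold of 1.0, and the function names are hypothetical choices for the example, not measured values for hearing or any other sense.

```python
import math

# Hypothetical values chosen for illustration only, not measurements.
WEBER_FRACTION = 0.10   # assume a 10% change in intensity is just noticeable
THRESHOLD = 1.0         # assume the faintest detectable intensity is 1.0 units


def just_noticeable_difference(intensity: float, k: float = WEBER_FRACTION) -> float:
    """Weber's law: the smallest noticeable change is proportional to the baseline intensity."""
    return k * intensity


def perceived_magnitude(intensity: float, threshold: float = THRESHOLD) -> float:
    """Fechner's law: sensation grows with the logarithm of intensity above threshold."""
    return math.log(intensity / threshold)


for level in (10.0, 100.0, 1000.0):
    print(
        f"intensity {level:7.1f}: "
        f"JND = {just_noticeable_difference(level):6.1f}, "
        f"sensation = {perceived_magnitude(level):.2f}"
    )
```

Each tenfold jump in intensity adds the same amount (the natural log of 10, about 2.30) to the computed sensation, while the step needed to notice a change grows tenfold. That is the music-volume example in idealized form.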

Well, that may seem a factoid useless to all but audio engineers and disc jockeys. Besides, it turns out that this mathematical relationship doesn’t really hold together at high levels of stimulus intensity.

I’m only mentioning it at all because early psychophysical discoveries like the Weber-Fechner Law were revolutionary in suggesting that Western scientists could quantify human subjective perception. Prior to that, nobody considered such a possibility. As with many a revolutionary idea, what made a lot of sense to apply in experimental psychology gradually devolved into the quantification of all sorts of subjective experience across the entire field of psychology.

This quantification of subjective inquiry got linked in with what is known as the phenomenological approach to studying internal experience and consciousness. I could mention Wilhelm Wundt, Husserl, Freud, Jung, Sartre, and all sorts of other seminal white guys in regard to that stuff, but I believe Weber and Fechner deserve much of the credit for the quantification of subjectivity.

Most psychological tests and procedures used today can be considered phenomenological methods and are philosophically tied to self-report and subjectivity. Even the psychologist as a trained observer works phenomenologically.

Some of us may be plagued by the philosophical debate as to whether what we call ‘reality’ should be based on our ‘sense impressions’ (empiricism) or upon the internal organizing and conceptualizing process of sense impressions as ‘phenomena’ within the mind (phenomenalism). Others of us may be busy texting about Kim Kardashian’s latest pose. I’m not making value judgments.

As for me, I’ve belonged to the phenomenalist party for many years, and you may note how readily this philosophy clashes with today’s fad, so-called ‘evidence-based practice.’ Yet in my view, ‘evidence-based practice’ in mental health studiously disregards its own roots in phenomenology.

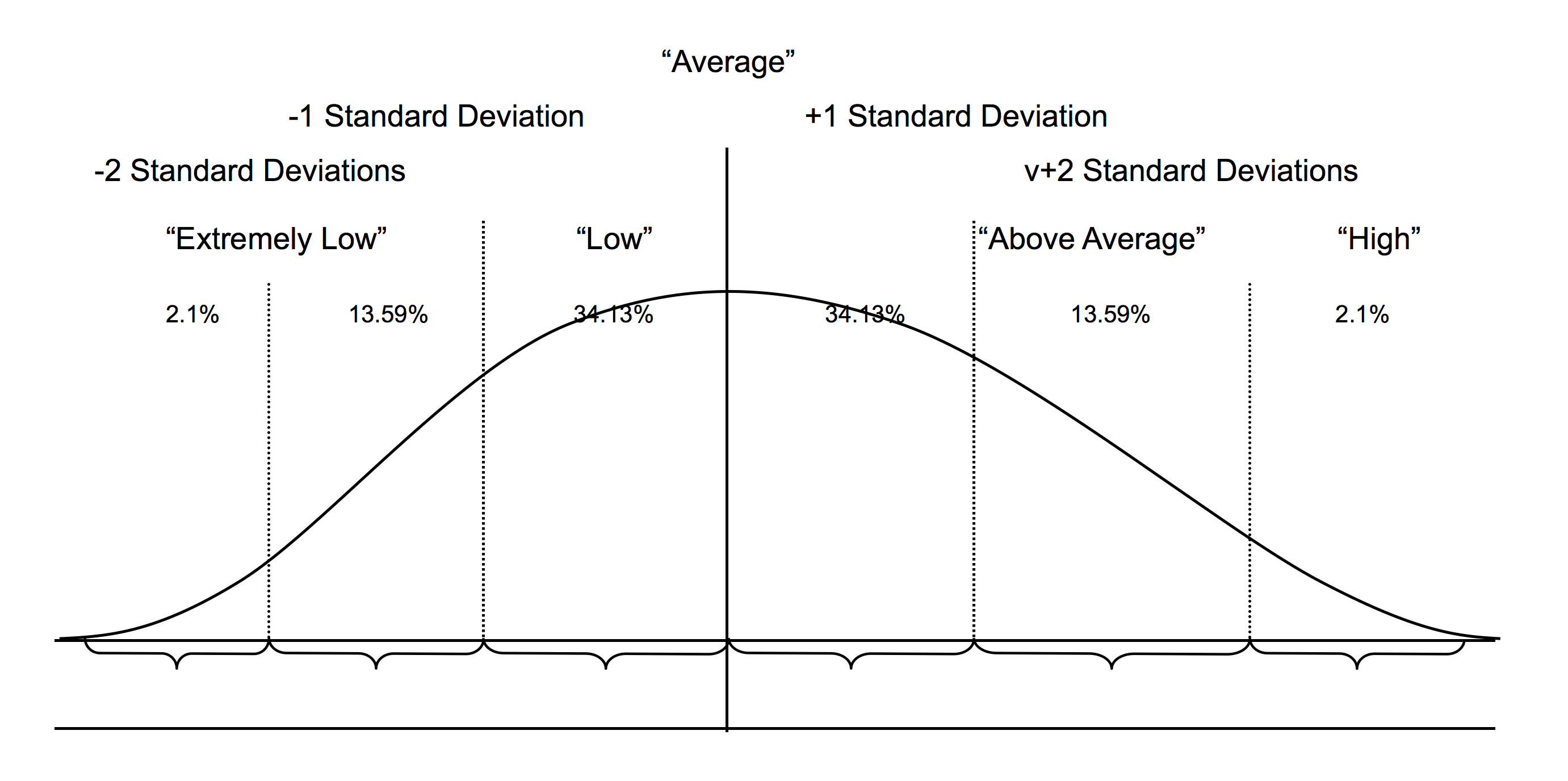

Are you still there? I’m changing the subject to the bell shaped curve. It’s also called the normal curve, or Gaussian curve, and it’s a big part of the extension of the phenomenological approach into quantitative research in psychology and psychiatry. If you don’t remember or never saw it before, the bell curve looks like this:

This statistically derived holy grail of phenomenology has deeply affected the lives of human beings all over the world. It has served as the Delphic oracle of normality and abnormality in many facets of Western mental health practice. It is the nucleus of the ‘evidence’ of the ‘evidence-based practice’ of which we speak in mental health research.

And who brought it to us? Galileo.

Huh?

Well, he was the first to notice that errors in astronomical observations vary in a predictable manner around the correct observation. Super-bright European white-guy scholars like Abraham de Moivre, Pierre-Simon Laplace, and Lambert Adolphe Jacques Quetelet blew people’s minds at the British Royal Society when they were able to mathematically formulate these error deviations and apply them to other branches of physics and astronomy. And Quetelet even started looking into criminality and developed certain early actuarial approaches suggesting that the bell-shaped curve could tell us some things about human beings.

Whoa! And we were pretty okay for a while using the bell curve in regard to height, weight, and certain other simple human characteristics. Then along came Sir Francis Galton; his protégé and biographer, the mathematical genius Karl Pearson; and the international eugenics movement. More about that in an upcoming piece.

The bell-shaped curve is the widely generalized tool for demonstrating that most people exhibit a middling amount of a particular characteristic or quality, while some people have less, some more, some have very little, and some have a great deal. No rocket science there, in my opinion.
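For readers who want to see the ‘middling amount’ claim in numbers, here is a small Python sketch using the standard library’s `statistics.NormalDist`. It assumes an idealized normal distribution; real test scores and trait measures only approximate this shape, and the function name `share_within` is a hypothetical choice for the example.

```python
from statistics import NormalDist

# A standard normal curve: mean 0, standard deviation 1. Any normal curve can be
# rescaled to this form, so the proportions below hold for every normal distribution.
curve = NormalDist(mu=0.0, sigma=1.0)


def share_within(k: float) -> float:
    """Fraction of a normally distributed population within k standard deviations of the mean."""
    return curve.cdf(k) - curve.cdf(-k)


for k in (1, 2, 3):
    inside = share_within(k)
    print(f"within {k} SD: {inside:.1%}   beyond (both tails): {1 - inside:.1%}")
```

Running it shows the familiar pattern: about 68% of an idealized population falls within one standard deviation of the mean, about 95% within two, and about 99.7% within three, leaving only a thin sliver in each tail.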

But we assume this ‘tendency’ to be true across many socially-constructed characteristics in mental health by relying on sometimes complex information-gathering tools like psychological tests and measures and large-frame research designs. It’s still very important to remind you that every bit of that work basically boils down to what people report about themselves, how they react, or how they answer questions compared to other people. We use a quantitative phenomenological approach to construct what is considered normative.

One significant, even scary, assumption here is that findings from a group of people can be applied to understanding and evaluating an individual member of that group, as long as the findings were derived under conditions adequate for ordering them statistically on a bell curve (a random, representative research sample of a population is one well-known criterion).
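The step from group findings to an individual usually runs through a standard score: a person’s result is expressed as a distance from the normative group’s mean, then converted to a percentile. The sketch below assumes a hypothetical test normed to a mean of 100 and a standard deviation of 15, with normally distributed scores; the function names and numbers are illustrative, not taken from any particular instrument.

```python
from statistics import NormalDist

# Hypothetical norms for illustration: the reference sample has mean 100 and SD 15.
NORM_MEAN = 100.0
NORM_SD = 15.0


def z_score(raw: float, mean: float = NORM_MEAN, sd: float = NORM_SD) -> float:
    """How many group standard deviations an individual's score sits from the group mean."""
    return (raw - mean) / sd


def percentile(raw: float, mean: float = NORM_MEAN, sd: float = NORM_SD) -> float:
    """Share (0-100) of the normative group expected to score below this individual."""
    return 100 * NormalDist(mean, sd).cdf(raw)


score = 70.0  # a single hypothetical person's score
print(f"z = {z_score(score):.2f}, percentile = {percentile(score):.1f}")
```

For a score of 70, this prints a z-score of -2.00 and a percentile of about 2.3. Every number describing the individual is defined entirely by the group’s mean and spread.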

More cocktail party words for you: the nomothetic versus idiographic debate. I don’t drink (alcohol) but these are the kinds of issues to bring up if you feel intoxicated people are crowding you. This is a philosophical debate that has never really been resolved and can be summed up with the question, can or should an individual be meaningfully compared to a normative group? Unfortunately, this ‘debate’ has become more invisible than ever under our current mental health technocracy.

If you want to know more, click here (thanks, Mr. Crane, International School of Prague). What you’re going to notice in his table behind the link is that the DSM and other diagnostic systems like ICD fit under the nomothetic approach. That’s true.

I’m strongly allied with an idiographic stance. I expand my idiographic views to getting to know unique communities in-depth (including indigenous communities). I do read normative research but don’t consider it directly applicable to unique individuals or communities in any particular way.

I take an idiographic position because I believe it’s more ethically conservative: in my view, nomothetic categories in clinical psychology are generally socially contrived, faddish, culturally nested, and/or pseudo-scientific. This is especially true regarding any ‘normative research’ derived using DSM or ICD categories. That’s why I consider the entire field of so-called ‘abnormal psychology’ to be mostly rubbish.

I’m preoccupied with the moral implications for personal liberty and freedom of imposing a statistical derivation pertaining to a group of people (bell curve) onto the lived experience of a unique and autonomous individual. I fundamentally disbelieve that group generalizations in psychology (at least) can be applied to individuals. Refer back to my phenomenalistic orientation to understand more.

I also don’t accept the idea that characteristics of a ‘random, representative sample’ from a society I view as massively dysfunctional should be used to define normality for any particular individual citizen.

At the risk of entirely monopolizing your time (well, maybe you’re on a long bus or subway ride or I’ve distracted you from less important things), I’d like to offer for your consideration (à la Rod Serling) the broadly accepted ‘mental health disorder’ concept of depression.

We all know what depression is, right?

It’s that very popular cultural word for describing a chronic negative emotional state in somebody. Depression is also a ‘major health care target’ of the Western world with its innumerable medication ad campaigns, new-fangled breakthrough psychotherapies, self-help books, webpages, blogs, magazine articles, and media guru talk shows.

And depression is the prolonged experience of sadness, right? Well, maybe it’s more than that. Okay, it’s chronic sadness and a sense of futility and self-isolation all combined, right? There’s also loss of productivity, disruption in activities of daily living, and poor social skills. I too suffer from poor social skills.

And people get sad the world over in the same way, correct? Hey, what about the existential issues pertaining to ‘what is my purpose’ and ‘why is there so much suffering in life’? Do we just relapse to unfiltered cigs and read Sartre? What about those religious and spiritual concerns in regard to depression? Watch The Bells of St. Mary’s? (At 43:55: “It’s like a world inside us and it’s up to us what we make of it.”)

I mean, depression is not grief exactly, that’s different. Well, not in DSM-5, really. Let’s just agree that depression could start with grief, but then the person carries on with it for much too long.

Dave, you say, when you see depression, you’ll definitely know it. We all know what we mean when we say depression.

Well, maybe we don’t. At least, there seems to be a lot of room for individual variance there. Could it be that understanding depression means taking an idiographic stance and asking the depressed person what he or she means?

No, instead, let’s make the American Psychiatric Association the final arbiter of what is meant by depression.

And here’s the plot twist: What might it mean if people suffering from oppression are relabeled [2] by a global biopharmaceutical research enterprise as instead suffering from depression?

It means that the political and social perpetration of oppression gets obscured, legitimate and understandable reactions are reframed as individual pathology, and mental and emotional affliction directly traceable to the social problems of racism, poverty, displacement, intolerance, war, and hatred are ascribed to deficiencies and impairments of the victims themselves.

Over the next few posts, I’ll be pulling together more specifics for you to delve into regarding how the bell curve, the nomothetic approach, and other quantitative methods inside the Western mental health movement have been used historically and contemporarily to oppress and disempower indigenous people.

* * * * *

References:

[1] See http://en.wikipedia.org/wiki/Weber%E2%80%93Fechner_law

[2] See, for example, http://www.uihi.org/wp-content/uploads/2012/08/Depression-Environmental-Scan_All-Sections_2012-08-21_ES_FINAL.pdf

Absolutely superb, David.

That second last paragraph is about as powerful as anything I have read.

Look forward to more.

Regards

Boans


Thanks for your comment, Boans. I suppose that second-to-last paragraph summarizes why I have felt a sense of mission trying to write and speak out. I’m hoping other people will too.


I don’t know, it leaves things at a vague point if you ask me. What _might_ it mean?

What’s the flip side? That sometimes a person has to deal with dissatisfaction in life? That not every expectation a person has is reasonable? That sometimes you might just be worse off compared to someone else, and there’s no one to blame for that, or you just have to get over it?


Great stuff, David. And I agree with Boans about the power of your second last paragraph.


Thanks, David. I’m very glad to know you and be engaged with the wonderful work you’re doing at Sharing Culture. Thanks also for inviting me to post a piece on the Sharing Culture site too, which I’ll take the liberty of linking here: http://www.sharingculture.info/5/post/2015/01/guest-blog-a-critical-analysis-of-sharing-culture-by-david-walker-phd.html


Lovely essay on how mass brainwashing works, generationally, leading to utter chaos and confusion. It’s up to each individual to wake up to their own truth, above and beyond whatever ‘society’ dictates. We are individuals, first, each of us with our own path, our own truth, our own spirit. Thanks for the clarity, here.


Thanks, Alex, I appreciate your comment and agree with you.


It all rests on the assumption that deviation from “the norm” is de facto always bad. If that assumption goes away, the whole argument is ditched.

I also think it’s fascinating (in an aftermath of a train wreck sort of way) to see how certain bell curves are only considered “abnormal” at one end. Does no one notice, for instance, that there is no “Hypoactivity disorder?” Why would that be, if deviation from the norm is bad? It’s clearly because low activity doesn’t cause problems for teachers and other adults caring for the child. I think it would be a lot more honest to call it “Adult Annoyance Disorder,” because it’s clearly based on how annoying the kid is, rather than his/her activity level per se. And that would also make it clear that it’s the adults’ needs that are at issue here, not the child’s.

Anyway, I’m happy to have such a clear elucidation of why using a bell curve to define “disease states” is both stupid and unproductive, unless you’re interested in profits and/or social domination. Not everyone is “normal,” or needs to be. Otherwise, we’d have to drug all of our creative geniuses into an inactive stupor. But wait a minute… that IS what we’re doing, isn’t it?

—- Steve


“That IS what we’re doing, isn’t it?” Yes, it is. When a child surprised a school social worker by getting 100% on his state standardized tests in the eighth grade, the mother got a call from that social worker, not congratulating her on her son’s success, but instead accusing her of “keeping your child up late nights studying, and pushing him too hard.”

But I was a “mean mom” who had my children in bed by 9 every night, and my son was constantly playing World of Warcraft because he never had any homework. My child had a “genetic” history of high IQs, however.

The psychiatric industry’s desire to stigmatize and tranquilize all outside the bell curve, even the creative and analytical “geniuses,” is counterproductive to the betterment of humanity. Maintaining the status quo is unwise.

Thank you for this interesting article, David.


By the way, my father was arguably the number one MIS specialist in the US banking industry in his heyday. But that was prior to the U.S. government deregulating that industry, and taking away all financial motives for the bankers to behave in a fiscally responsible and ethical manner. Now it appears we have the European bankers, whom our founding fathers warned us about, in charge of the U.S. banking industry. “Too big to fail” thieves … but there are no economies of scale benefits, nor any other benefit to the majority of Americans, to creating banks that are “too big to fail.” And we should all be aware of the reality that “power corrupts, and absolute power corrupts absolutely.”

I will say, pointing out the “dirty little secrets” of today’s unethical bankers requires a lot less research than pointing out the “dirty little secrets” of today’s unethical medical, pharmaceutical, and religious industries. Thank you to all who are working on pointing out the complete fraud that is today’s US psychiatric system.

And, no doubt, psychiatry’s goal of maintaining the current status quo, in many industries, is unwise.

“If the American people ever allow private banks to control the issue of their currency, first by inflation, then by deflation, the banks … will deprive the people of all property until their children wake up homeless on the continent their fathers conquered …. The issuing power should be taken from the banks, and restored to the people, to whom it properly belongs.” Thomas Jefferson (1809).

And read the rest:

http://www.themoneymasters.com/the-money-masters/famous-quotations-on-banking/

We currently have, either stupid people or, criminals in charge of the U.S., playing the same games they did in Germany during WWII. At least we can say it’s obvious today’s 1% lacks respect for the “creative geniuses.” We’re supposed to learn from history’s mistakes, not repeat them.


“We currently have, either stupid people or, criminals in charge of the U.S…”

There’s the problem, right there, and it’s a HUGE problem. Trickles down, too, until someone finally stands up to it and refuses to enable that game.

I think more and more people are seeing this, though, and getting sorely fed up with incompetence and corruption that are so obviously real and concrete causes of people feeling distressed, paranoid, and, in general, creating needless suffering for everyone concerned. Here’s to continued awakening…


Thanks for your comment, Steve. I believe so-called ‘normative’ research in mental health can function very well as a tool for pressures to conformity. Also, when it’s assumed that so many educational outcomes will result in a bell curve (high, average, low; gifted, average, disabled, etc.), we’re encouraged to assume that we should accept this as the status quo, rather than wonder what we could do to move all students toward the high end and corrupt the entire concept of a curve as an outcome.


This is absolutely brilliant! I am a child protection Social Worker (American working in the UK) and have believed for many years that mental health issues were connected to childhood abuse the majority of the time, but for a long time there was no research into this or any belief in it until recently, with ‘trauma informed therapy’. I do believe everything in our lives and body is connected to how we manage our emotions (and then behaviour); therefore, we always have to be mindful of context, including culture, history, beliefs, experiences and spirituality.

I look forward to reading more…the breakdown of the language is much appreciated.


Thanks for your comment, third43. I agree wholeheartedly with what you seem to be implying: that trying to understand and help someone with ‘traumatic experiences’ involves holding a deep respect for their subjectivity and life experience. For me, a phenomenalistic, idiographic, and qualitative approach (as described above) has helped me serve people who’ve requested my assistance much more than the normative, pathologizing, and evaluative stance that currently dominates ‘mental health systems.’


You would think that nomothetic frames would suit physical therapies much better. The challenges of creating an understanding of mental health as a metaphor are already way too demanding on the talent pools of the mainstream, though. Anything suitable for converging upon dogma will always bring home the bacon here in Amerika.


Hi David. Thanks for this thoughtful and provocative post. With regard to the nomothetic versus idiographic debate, I noticed you didn’t discuss the large and consistent body of research on this issue that would seem to favor statistical over subjective methods of making clinical judgments (e.g., http://www.tc.umn.edu/~pemeehl/167GroveMeehlClinstix.pdf). Will you be covering this in one of the future posts you mentioned? It is a critical issue that cuts to the heart of your position, and you would seem to have your work cut out for you according to the data. I look forward to your future contributions.


Hi academic and travailer-vous, Thanks for your comments and the link. Your comments are very different and you got me thinking. So here’s a long comment back.

Who can’t admire the work of Paul Meehl, defender of the nomothetic? While taking the stance he did, Meehl himself did profess admiration for Gordon Allport, patron saint of the idiographic position. Meehl also noted “if nothing is rationally inferable from membership in a class, no empirical prediction is ever possible” (1954). I think he was trying to make a different point, but I agree with his statement more readily than he likely did.

Obviously, many if not all classes or categories researched in social science exhibit a much wider degree of variability in consensual definition and operationalization than phenomena in natural sciences (although there’s certainly controversy there). Yet such categories are routinely ‘reified’ and applied to individual lives as though they have the same level of empirical validity as, say, gravity does in physics.

I have encountered numerous individuals who seem to defy the class definition pertaining to ‘schizoaffective disorder’ yet still have this label affixed to their personhood (and to their detriment). On the other hand, I’ve yet to find any individuals who defy gravity. Nomothetic social science studies researching constructs of the same name emerge from theories that define them very differently yet are frequently collapsed together in review articles to suggest to the rest of us that we now know something about ‘depression’ or ‘anxiety’ or ‘criminality’ or ‘schizoaffective disorder.’ I don’t think it prudent or wise to develop inferences from such an array of varied information and apply it to any single individual. I consider it deceptive at least and oppressive at worst.

I’m philosophically at odds with the claim of (problematic) nomothetic research in telling me anything particularly meaningful about an individual person. This is where I part ways with Paul Meehl and ally with Allport, who said “psychological causation is always personal and never actuarial.” Please understand, however, that I’d also be the last one to defend the predictive validity of ‘clinical judgement’ in comparison to nomothetic prediction. While I’m a critic of the nomothetic approach, I also don’t accept the priest-like omniscience presumed by society for licensed psychologists, psychiatrists, etc.

Just because nomothetic approaches may do better than the subjective (psychic) predictions of professionals, doesn’t mean they come out smelling like roses. I’m reminded of the numerous large frame studies of ‘violence potential’ done in the 60s and 70s using data from thousands of released felons. In one study, 86 percent predicted to be ‘potentially violent’ did not exhibit violence; in another, for every ‘correctly-identified’ felon, there were 326 who had been ‘incorrectly-identified’ as potentially violent; in another, complex multivariate equations still yielded an eight-to-one false-positive ratio.

It’s a common clinical psychology heuristic, therefore, that ‘history of violence’ or ‘history of suicidal acts’ is our best bet for making predictions along such lines. Yet they’re not particularly accurate criteria, just cautious ones, and they signal just how limited predictive conclusions from nomothetic research really are. They are also mostly common sense – “they did it before, and they might do it again.” If I had time, I could have a field day with intelligence and personality tests, by the way.
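The arithmetic behind those false-positive figures can be sketched in a few lines of Python. This is an illustration, not a reanalysis of the original studies: the first (hypothetically named) function only converts the reported ratios into the share of flagged people who actually became violent, and the second applies Bayes’ rule with an assumed sensitivity of 0.8 and specificity of 0.9, values invented for the example, to show why predicting a rare behavior produces mostly false positives.

```python
def ppv_from_false_positive_ratio(false_per_true: float) -> float:
    """Share of flagged people who actually showed the predicted behavior,
    given how many false positives accompany each true positive."""
    return 1 / (1 + false_per_true)


def ppv(sensitivity: float, specificity: float, base_rate: float) -> float:
    """Positive predictive value via Bayes' rule."""
    true_pos = sensitivity * base_rate
    false_pos = (1 - specificity) * (1 - base_rate)
    return true_pos / (true_pos + false_pos)


# Ratios as reported in the studies summarized above.
for label, ratio in [("86% false positives", 86 / 14), ("326 to 1", 326), ("8 to 1", 8)]:
    print(f"{label}: {ppv_from_false_positive_ratio(ratio):.1%} of those flagged were violent")

# Hypothetical screen (sensitivity 0.8, specificity 0.9) applied at different base rates.
for base_rate in (0.20, 0.05, 0.01):
    print(f"base rate {base_rate:.0%}: PPV = {ppv(0.8, 0.9, base_rate):.1%}")
```

With these inputs, the reported ratios correspond to roughly 14%, 0.3%, and 11.1% of flagged individuals actually becoming violent, and the hypothetical screen’s positive predictive value drops from about 67% to about 7.5% as the behavior becomes rarer.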

So I don’t really feel what’s described as the ‘heart of my position’ revolves around critiquing nomothetic approaches by arguing on behalf of subjective clinical judgment. I’d be on shaky ground using the pot to call the kettle black.

Instead, I want to show how clinical psychology and psychiatry are far more mired in philosophical quandaries than they let on and why they deserve to be roundly critiqued, deconstructed, and regulated as they become more global in influence. My aim in future pieces will be to demonstrate the social-historical origins of this evolving applied mental health movement and to expose its oppressive features at the point where they are brought into boldest relief: at the boundaries of cultural difference in Indian Country.


I recognize that your reply here mostly addresses academic’s suggestion of narrowness (maybe of circularity, too?) in your analysis. But I appreciated all the clarifications you offered. The principal meaning of my comment is that if you’re planning to stick to native rights perspectives, then I’m not going to be above a few cynical remarks. Of course, I think that the shelving of native rights issues is the other kind of bad, nomothetic cynicism that is expressive of bad faith.


Former student from Seattle. I believe the only two professors I learned ANYTHING from were yourself & Dr. Kerr.

This hit me right in the stomach:

” It means that the political and social perpetration of oppression gets obscured, legitimate and understandable reactions are reframed as individual pathology, and mental and emotional affliction directly traceable to the social problems of racism, poverty, displacement, intolerance, war, and hatred are ascribed to deficiencies and impairments of the victims themselves.”

The mind/mechanism that created the concept of “drapetomania” somehow feels analogous to this process.

Looking back at recent history, it seems these tactics have been generalized & used against the entire population. It’s a pattern that repeats itself; whether through the cult of c0vid, the war on (terror, crime, drugs etc) & complete erosion of social cohesion, civil & economic liberty. As a people, we have been systematically shell shocked, dehumanized, propagandized, exploited & corrupted for so long most don’t even notice. Per the Brezhnev doctrine, “the situation has been normalized.”

During Psychopathology 1 (2010) there was a presentation about eugenics. Would love to read/hear your thoughts about how this is playing out now through the foundations, bioethics/population control/sustainable development, the World Economic Forum & big pharma.

Appreciate you

Jeremy
