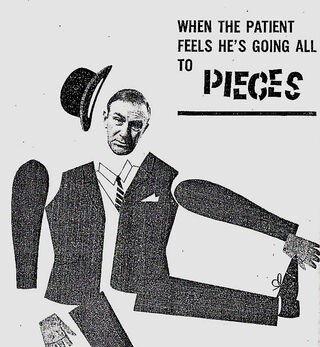

The history of antidepressant withdrawal dates to the first articles on imipramine in the late 1950s, when the newly created tricyclic was introduced to psychiatric hospitals as an “interesting and promising antidepressive.”

More than a half-century later, after well-publicized assurances by academic psychiatry that withdrawal from later-generation SSRI antidepressants is “mild, transient” and “self-resolving,” it is useful to compare discussion of both generations of psychiatric drugs and to focus on shared efforts to deny and minimize their withdrawal syndromes.

This article, part of a series offering a deep dive into the history of antidepressant withdrawal, puts under renewed scrutiny academic and industry claims that SSRIs are broadly “well-tolerated.” As we’ll see, their record, like that of their earlier counterparts, has in fact been mixed from the outset and has raised multiple red flags.

Within two years of its 1957 launch on both sides of the Atlantic, imipramine was found in an important study by Andersen and Kristiansen to have “led to secondary effects or complications in nearly all cases” among the 85 patients treated (27 men and 58 women). The study was published in Acta Psychiatrica et Neurologica Scandinavica, a still-prominent European journal of psychiatry. Although it drew favorable conclusions about imipramine, describing “mild or medium depressions” as “cured or ameliorated in 4/5 of the cases” after 3–6 months of treatment, the article also provided a systematic appraisal of the drug’s “secondary effects” and “complications,” which recurred with a frequency and intensity that conflict with the study’s ultimate verdict.

That antidepressant withdrawal was flagged at the outset, with serious “withdrawal symptoms … observed in 15 [out of 85] cases” (17.6 percent in this study), “lasting about 3 days, both following gradual and acute termination of treatment,” helps explain why a battle would ensue, much like the one later fought over benzodiazepine tranquilizers such as Valium, to downplay those harms and limit study of their short- and longer-term consequences, including by recasting them as a “discontinuation syndrome.” The phrase implies that treatment ended prematurely, suggesting a need to reinstate the drug at an altered dosage or to switch to a different kind of antidepressant.

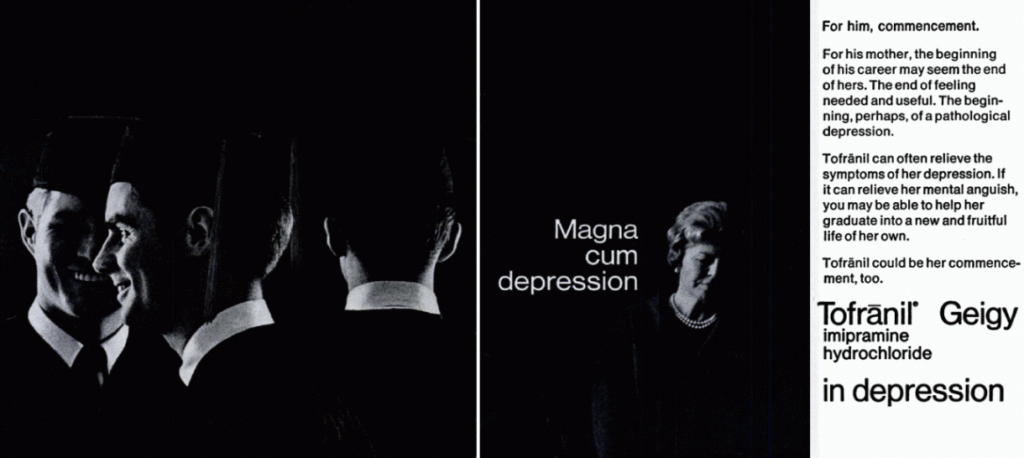

In The Antidepressant Era, David Healy provides a granular account of the way drug-maker Geigy marketed imipramine as Europe’s first antidepressant. Initially known as G22355, the compound was sent to trusted clinicians such as Roland Kuhn, then based at a large (700-patient) state psychiatric hospital in Münsterlingen, northern Switzerland. Healy’s account describes a process fraught with trial and error, with the drug-maker sending the researchers an array of compounds whose “psychotropic effects” turned out to be “indistinct.” With the drugs’ adverse effects largely unknown to researchers and manufacturer alike, the hospitalized patients in this case could not have given informed consent, which makes the haphazard testing and experimentation with dosage all the more notable.

First tested by Kuhn as an antipsychotic, G22355 was given to a group of patients diagnosed with schizophrenia. As Healy recounts, “The study design was that those on chlorpromazine were taken off that drug and put on the new one,” presumably without being told about this, to minimize the influence of placebo, “while those newly admitted, who were drug-free, also had G22355 given to them,” likely on identical terms, for the same reason. “It was administered to over three hundred patients with a variety of conditions. Many of the patients previously on chlorpromazine [an antipsychotic] began to deteriorate, becoming increasingly agitated. Some appeared to become hypomanic.”

By 1955, Kuhn had readied a second trial to study the compound’s effects on those diagnosed with depression. Although the study eventually would include 40 patients, Healy describes the responses of the first three as “so dramatic that company scientists, ward nursing staff, and Kuhn [himself] had little doubt that the treatment was effective.” There is a strong suggestion here of a rush to judgment: of enthusiasm at rapid change overwhelming caution about the almost-immediate appearance of mild-to-serious adverse effects, potentially with longer-term harms. After six days on imipramine, the first of these patients, Paula J.F., was said to be “completely transformed.” A mere 17 days later, long before further patients could be added and properly assessed, Kuhn sent in a drug-study report “strongly endorsing the drug as a potential antidepressant.” He had done so on the basis of one to three patients, each of whom had been in treatment for a week or less.

Over the next three years, Kuhn went on to treat more than 500 patients with imipramine, and many of them also reported becoming manic on the drug, an adverse effect identified in later studies as well. Nevertheless, Kuhn firmly represented the drug as “an antidepressant but not a euphoriant.” Healy’s broader assessment suggests extensive cherry-picking of preferred outcomes: “He picked out the features of a syndrome that he felt was particularly likely to respond, a state that for some time ha[d] been called endogenous depression, vital depression, or melancholia.”

Kuhn’s reckoning was that pharmacology could serve as a basis for treating neurotic states, a category that then broadly included “depressions.” His perspective was shared by some psychoanalysts willing to use psychotropics and psychostimulants to accelerate talk therapy, on the basis that they might “promot[e] verbalization of repressed and subconscious material,” according to Joseph Davis.

Keenly alert to imipramine’s vast market potential via expanded diagnostic parameters and treatment categories, Kuhn soon pitched his colleagues in biological and psychoanalytic-based psychiatry on both sides of the Atlantic, in language mixing boosterism with periodic but unmistakable signs of messianism:

“An important field of research opens up here, rendered accessible for the first time by the recent development of psychopharmacology, and touching not only problems of psychiatry but also those of general psychology, religion and philosophy,” Healy quotes Kuhn as saying.

The evidence base accruing around these new compounds was, by contrast, far less favorable. Although Andersen and Kristiansen’s 1959 study of imipramine-treated depression, described earlier, concluded that “the drug can cure or improve about four-fifths of patients with endogenous depressions,” it dwelled at length on the fact that the drug “led to secondary effects or complications in nearly all cases.”

In addition, imipramine was withdrawn from 2 male and 5 female patients “due to complications”:

One of these patients was a 39-year-old man who had previously been well, apart from slight infections and pneumonia 6 months previously. Prior to treatment he did not manifest symptoms of somatic abnormality, and the EEG was also normal. During the first 5 days of treatment he suffered from universal motor restlessness in the form of unintentional, coarse jerking movements. This disturbance intensified in spite of reduction in the dosage to such an extent that the patient was hardly able to descend the stairs or feed himself. When at its height, there were myoclonia [involuntary muscle spasms] and jerky, throwing movements of the head and extremities. His gait reminded one of ataxia [impaired coordination, as when drunk] and on the whole he resembled a case of Huntington’s chorea [a neurodegenerative disorder marked by motor and cognitive decline].

The patient’s rapid deterioration from “previously … well” to “hardly able to descend the stairs or feed himself” is striking and alarming, not least because 19 other patients also “manifest[ed] motor disturbances,” if less severely: 20 patients in all, or 23.5 percent of the study overall. That represents a tendency to tardive dyskinesia (involuntary twitching and muscle spasms) in nearly one in four patients. Just as striking, in the case of the 39-year-old man, “the pattern returned to normal shortly after [imipramine] had been withdrawn,” leaving little doubt that the drug was ultimately the cause.

Given the authors’ general lack of interest in longer-term effects and their repeated rhetorical efforts to downplay the consequences, especially relative to the sheer number of adverse effects reported, Andersen and Kristiansen’s overall conclusion seems unduly favorable toward the drug. Prescribing doctors were reassured, for instance, that “only a few manifested skin disturbances”; that “at least the mania did not appear to become worse during treatment”; and that “the patients suffered from various secondary effects, but there were no deaths or lasting complaints.”

1959 turned out to be a watershed year for studies of antidepressant withdrawal. That same year, A. M. Mann and A. S. Macpherson, researchers at Montreal General Hospital and McGill University, published a study of 70 patients (50 female, 20 male) with mild, moderate, or severe depression, 53 of them treated in-hospital and 27 as outpatients. After three weeks on dosages ranging from 50–300 mg/day, 20 patients (28.6%) were assessed as “recovered,” based on criteria established by H. E. Lehmann the previous year, and a further 24 (34.3%) were considered “much improved,” while 9 (12.9%) were found to be “unimproved” and 4 (5.7%) were assessed as faring “worse.”

Of those, two patients “had to be withdrawn from the drug after only two days’ medication,” because “both stated they felt ‘completely overwhelmed’ by the drug, with very marked dizziness, severe palpitations, jerky movements, and markedly increased tension and agitation on the usual initial dosage scale.” In another case, “a toxic psychosis with hallucinations and delusions was seen … after one week of administration of the drug. This subsided on discontinuation of the drug.”

Overall, they concluded: “We feel the problem of side effects to be one which merits serious consideration.” So frequent were the side effects of one type or another, they worried, that “fewer than 10% of patients were completely free of them.”

In my next post on this topic, we’ll see significant parallels arise between the 1950s and 1980s, as SSRI antidepressants are introduced and similar withdrawal issues emerge.

“More than a half-century later, after well-publicized assurances by academic psychiatry that withdrawal from later-generation SSRI antidepressants is ‘mild, transient’ and ‘self-resolving,’ it is useful to compare discussion of both generations of psychiatric drugs and to focus on shared efforts to deny and minimize their withdrawal syndromes.”

Indeed. The doctors believe the adverse and withdrawal effects of the antidepressants are “bipolar,” thus they’ve misdiagnosed millions of people, resulting in an iatrogenic “bipolar epidemic.”

https://www.alternet.org/2010/04/are_prozac_and_other_psychiatric_drugs_causing_the_astonishing_rise_of_mental_illness_in_america/

It’s a shame none of the doctors were intelligent enough to read their DSM-IV-TR, which clearly states:

“Note: Manic-like episodes that are clearly caused by somatic antidepressant treatment (e.g., medication, electroconvulsive therapy, light therapy) should not count toward a diagnosis of Bipolar I Disorder.”

And it’s even more of a shame that the psychiatrists took that disclaimer out of DSM-5 instead of adding the ADHD drugs to it, as should have been done if the psychiatrists had any ethics whatsoever.

As to “withdrawal from later-generation SSRI antidepressants is ‘mild, transient’ and ‘self-resolving,’” this is absolutely untrue: I’ve had “brain zaps,” a well-known antidepressant withdrawal symptom, for 20 years and counting.

As one who has many Northwestern graduates in my family, I’m curious whether they’ve stopped teaching the DSM at Northwestern yet? And please do share with the Northwestern psychiatrists my medical research finding that the neuroleptics/antipsychotics can create both the negative and positive symptoms of “schizophrenia.” The negative symptoms can be created via neuroleptic induced deficit syndrome, and the positive symptoms can be created via anticholinergic toxidrome.

Meaning that both “bipolar” and “schizophrenia” are iatrogenic illnesses, created with the psychiatric drugs. I do so hope to see an end to psychiatry’s modern day, staggering in scope, holocaust soon.

https://www.nimh.nih.gov/about/directors/thomas-insel/blog/2015/mortality-and-mental-disorders.shtml

It’s so sad they’re killing “8 million” innocent people, mostly child abuse survivors, not “dangerous” people, every year.

https://www.indybay.org/newsitems/2019/01/23/18820633.php?fbclid=IwAR2-cgZPcEvbz7yFqMuUwneIuaqGleGiOzackY4N2sPeVXolwmEga5iKxdo

https://www.madinamerica.com/2016/04/heal-for-life/

I’m quite certain our country would be a much better place if we arrested and convicted those who abuse children, rather than DSM-defaming and neurotoxically poisoning survivors of abuse, and their legitimately concerned family members, en masse.

“His perspective was shared by some psychoanalysts willing to use psychotropics and psychostimulants to accelerate talk therapy, on the basis that they might ‘promot[e] verbalization of repressed and subconscious material,’”

Same as “spiking” a suspect with benzos and then putting a gun to their head and telling them you’re going to execute them? They start to verbalise the repressed and subconscious confession you need fairly quickly. Any complaint about the “spiking” gets called a “hallucination” and becomes a paranoid delusion that requires further “treatment.” Do three or four, show that the “treatment” works, and it can be rolled out in no time. Don’t ya love medicine 🙂

And the really good news is, no one dares to look at the proof.

Yes, and thanks for your comment. I wanted this put in (hopefully with a clear irony light attached to those quote marks) because, unfortunately, it’s still quite common to hear psychotherapists and psychoanalytically oriented psychiatrists talk of SSRIs (and psychiatric drugs more generally) as somehow “kickstarting” therapy and “accelerating” it through combination treatment. The notion remains widespread despite, as you note, mounting evidence of serious adverse effects and withdrawal syndromes from the drugs themselves, which would obviously impede productive therapy and should be prompting greater caution. But that doesn’t appear to be getting through to many prescribers. Worrying and frustrating.

Yeah, there was some study way back that claimed that “combined therapy was better than either medication or therapy alone.” It became some sort of mantra such that any challenge to it was met with derision, at least in the circles I was traveling in at the time, even though many future studies showed no such thing. It’s one of those myths like the “broken brain” myth that has little to no support, and yet persists like a bad case of poison oak.

Yes Steve, it still exists, I mean the propaganda. Most people don’t have discerning brains. Some go on to develop discernment. Most people like to be told what is true and new. “Ohh,” they say, “a new ‘scientific finding’!” And they gobble like turkeys and spit it out like parrots. No effort by the grey cells, nada.

Hey Steve –

When I talk about 3 weeks – I’m only talking about 10% tapers.

EACH 10% taper takes about 3 weeks to adjust, and for symptoms to resolve. (and yes, it can take longer – but never shorter)

IF you Cold Turkey – you could be talking years, for all of these 3 week neurotransmitter adjustments to take place. They “stack up” and all your dysregulated systems have to try and right the ship before it topples. In Surviving Antidepressants, we call it “Humpty falls off the wall.”

The 3 weeks does seem to be carved in stone, however, whether you’ve been on the drugs for 1 year or 10: there is an adjustment after 3 weeks of chemical change. This may be true of other drugs that affect neurotransmitters, like alcohol, tobacco, etc. It seems to be true of all psych drugs, whether “antidepressant,” benzo, neuroleptic, or “mood stabiliser.”

You cannot make a broken leg heal any faster than it does. Likewise, when your brain has upregulated or downregulated to a drug OR A DOSE (tapering), it takes at least 3 weeks to recover from that change.

This may be why the label literature speaks of “resolved in 2–4 weeks,” but we all know that is a lie for at least 50–80% of people who have taken these drugs.

That makes sense. Of course, the 2-4 weeks statement is not based on any kind of research. It’s either wishful thinking or outright disinformation.

Another myth: You need to take the drugs for 4-6 weeks to see the benefits.

According to the very studies used to claim the drugs are effective, the drugs’ benefit decreases over time.

Now we know the reason the drug has benefits in these studies at the beginning is because the study design is to put the “placebo” group through withdrawal from the same drugs.

The 4-6 weeks myth in practice gets people addicted to the drugs even if they don’t feel any benefit. Then if they try to quit they go through withdrawal and are told it’s proof the drugs are good.

Wow, I never thought of it that way, but that really does make sense! I don’t suppose anyone could be convinced to study that point, though. The conclusions might cost people too much money and status!

Of course, Willow. But you know, when you type this stuff, it helps them develop more “reasoning” 🙂 Although I’m sure they practice “scenarios” a LOT. I’m quite certain they use the smartest shrink to come up with arguments, and then all the ones holding the rubber duck develop counterarguments until one seems suitable for every shrink to use as an arsenal with their subjects. And of course the media.

Actually, as evidenced in tapering and withdrawal – the neurotransmitters do take about 3 weeks to upregulate or downregulate from chemical intervention.

Somewhere on SurvivingAntidepressants.org is a study that led our founder to establish our protocols. It really does seem to be true.

So – taking the drug – it takes 3 weeks to upregulate and adjust to the chemical intrusion. And withdrawing from the drug – it takes 3 weeks after each adjustment. (SurvivingAntidepressants recommends 4 weeks between tapers, so that these adjustments don’t stack up and throw your system into chaos. This gives a one-week buffer for symptoms to settle.)

This is why “med changes,” especially the “cold switch,” are hell.

It’s similar to the way that a broken leg cannot knit any faster than 6 weeks…it takes at least 3 weeks for neurotransmitters to adjust to these chemical changes.

Yes, it is convenient for drug companies that this is enough time to be hooked on the drug – but that is not the only factor at play here.

This is only true for short-term usage. It can take much, much longer the longer you have been using the drug, according to well-researched drug abuse studies, which are completely analogous. In fact, with really long usage, no one really knows if the brain ever fully recovers.

Wouldn’t it be logical to assume taking drugs that cause cognitive impairment would make talk therapy less effective?

A study did find that talk therapy was less effective for those taking the drugs.

Basically, what the mental health industry did (which is what they almost always do) was fabricate a fact-free reason why the drugs help.

Some therapists probably mistake no longer wasting time pushing drugs on people who do not want them for evidence that taking the drugs improves therapy. When you take the drugs, the therapist doesn’t have to push them on you, and something else can be talked about.

https://www.madinamerica.com/2019/11/psychotherapy-less-effective-people-poverty-antidepressants/

Thank you, Christopher.

There are no “side effects,” only effects. People’s fears and desperations are absolutely not a reason to conjure up chemicals in a lab and call them “medications” and “side effects.”

The most horrific practice ever was to tamper with people’s brains and chemicals. It should be completely outlawed.

There is an asinine question that persists: “Well, what do we do with those people?” As if keeping a horribly gone-wrong paradigm and practice is the only choice there is.

Any defender of the “MI” system ALWAYS points to someone who had a “psychosis.” ALWAYS.

They use that which looks weird to people. They still revert to examples of “others,” and that is where the listener does not have enough experience or grey matter to use discernment to further analyze what exactly is going on.

Thanks for your comment, Sam. Yes, regrettably, “psychosis” almost always is invoked as a validating foundation, even (or especially) when it is not. Of particular interest to me there is that DSM-II in 1968 began the process of deleting earlier references to psychical states as “reactions” and turned them instead into “disorders,” a move consolidated by DSM-III (1980), so we lost situational and social-environmental stressors almost at the stroke of a pen. That’s also one explanation, incidentally, for how the “biological model” and ontologized brain states became predominant, particularly in the U.S. in those decades and really ever since.

Indeed, by the “stroke of a pen”.

It is interesting that they used psychological torture successfully on prisoners. So we would then have to assess whether a prisoner’s reactions are a disorder or an illness. After all, his reactions look like stress, confusion, despair. And I am absolutely certain that his brain would be lighting up all over the place.

So would it then be reasonable to put him on psych drugs? Now, perhaps if the torture continued for decades, one would have a destroyed prisoner. But the drugs would not make him better, nor would they help him. The best way to “treat” him would be to provide a safe place for him and to try to help him regain trust, which of course can take time.

Now we just have to wait another 50 years to see what they pen next.

“so we lost situational and social-environmental stressors almost at the stroke of a pen. That’s also one explanation, incidentally, for how the ‘biological model’ and ontologized brain states became predominant, particularly in the U.S. in those decades and really ever since.”

It also explains how the Inquisitors came to believe that witches were made of wood. They subject you to acts of torture, and call your response a mental illness, and then refer you to the people who tortured you. If people were only aware that the images from Abu Ghraib could be obtained in our local ‘hospitals’ would they still consider the ‘patients’ ill? I’ve seen worse done to ‘patients’ here, though it is the case that Mr Obama decided not to release ALL the photos of the types of ‘treatments’ available.

Garth Daniels might give you a clue.

It’s odd: if you present people with dozens of studies finding that the drugs worsen the very symptoms they are said to help and also cause a bunch of new physical and mental illnesses, the response is “What else are we to do?”

The idea doesn’t cross people’s minds that maybe not taking drugs that worsen the “illness” and cause other illnesses is a better course of action than spending $5,000+ a year poisoning people.

It seems there is some idea that someone has to “do something” about people feeling bad, instead of just being there and allowing people to feel whatever they feel.

Exactly, Willow and Steve.

Thank you very much for the historical review of these early studies on imipramine. It shows the emergence of multiple side effects, some of them serious, but the studies do not really speak of a withdrawal syndrome, which I am sure exists with these drugs. However, I cannot find literature that supports this clinical observation. It would be worthwhile to get these studies carried out exhaustively. Thanks again.

Thank you for your comment, Victor. Yes, those studies should be done exhaustively, that is, systematically, and unfortunately they have not been, in part because the pharmaceutical companies have not wanted the effects of their drugs studied that way. Of course, we are still dealing with the consequences of their negligence. It is very frustrating.